The Toolkit for Digital Liberation

A Bit of Background and Truth

Algorithms don't just reflect power — they protect it.

I've spent over 20 years in strategic communications: working in journalism at ABC News, MTV, and BET; leading crisis communications for Airbnb.org's refugee response; transforming digital infrastructure at the NYC Housing Authority for 400,000 residents; and building brand strategy for cultural institutions. I teach AI Marketing Ethics. I hold a credential in Ethical AI from Cambridge.

I tell you all of that to say: I'm not theorizing about algorithmic harm. I'm testing it, documenting it, and living it.

When I started creating content that criticized the very platforms I was posting on, my YouTube channel went from 58,000 views a month to 206. YouTube's own AI admitted — in writing — that it served my content to hostile audiences and used their rejection to justify burying it further. Then Google started calling me three times a week trying to sell me ads to buy back the reach they took.

I ran a controlled test. Same podcast. Same voice. Same format. The only variable was whether my face was visible. The faceless version got over 100 views in two hours. The version showing my Black face? Two views. A 50x difference.

Your face is the variable. And that is the cost to our future.

Who Built This

The Toolkit was created for the "Muted on Arrival: Suppression, Platforms, and the Black Creator Economy" panel at Black Week NYC in October 2025. The concept grew out of the panel’s contributors, including writer Chereese Sheen, and was supported by Color of Change.

As the founder of The Ajayi Effect, I led the strategic framework and content development for this Toolkit because I believe that if access isn't at the center, I won't be. This work sits at the intersection of everything I do — AI ethics, brand strategy, mission-driven innovation, and the belief that the communities most impacted by technology are the ones with the most authority to shape it.

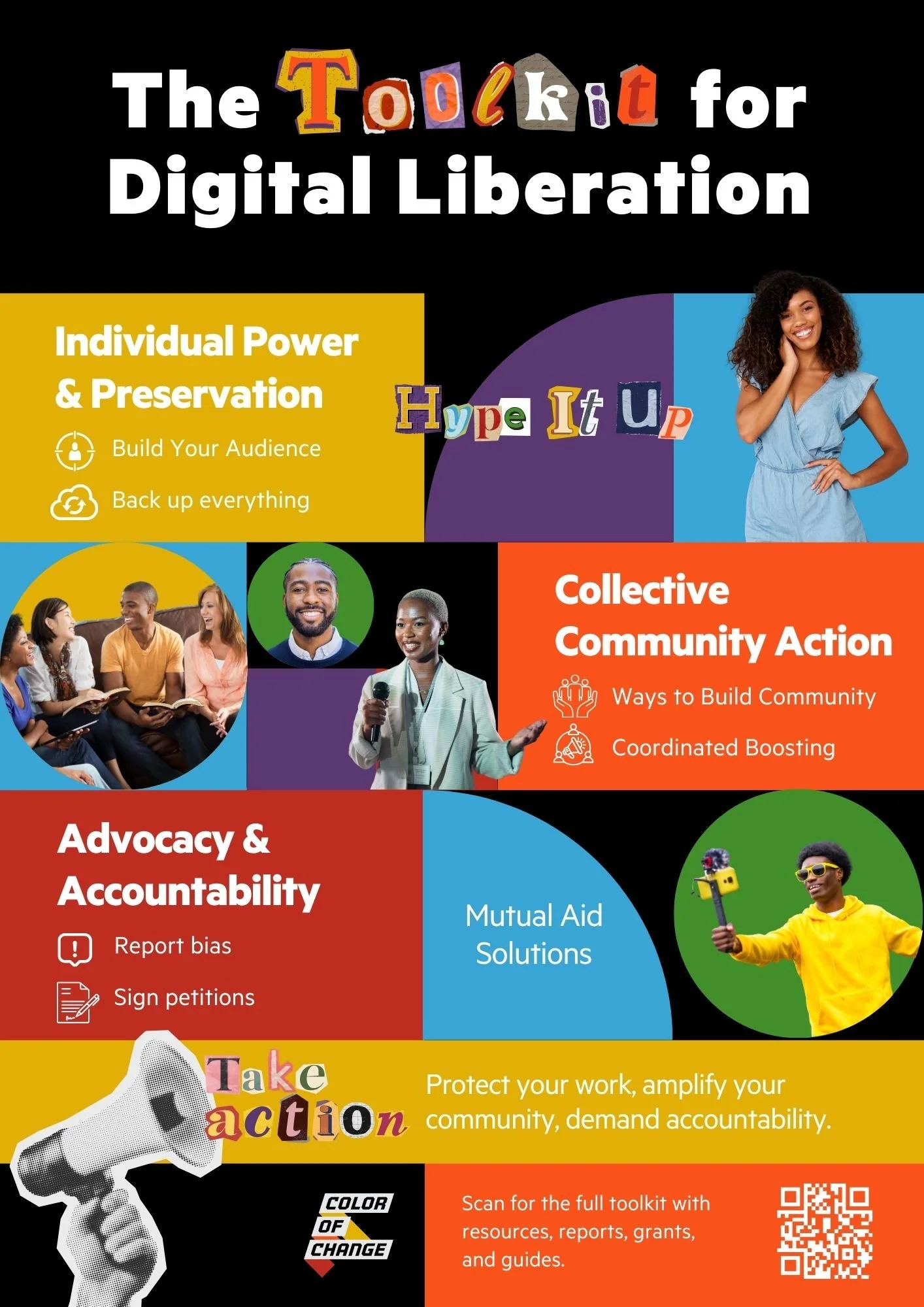

Three Pillars for Fighting Back

Protect Yourself. Diversify your platforms. Monetize directly. Build an audience you own through email and newsletters no algorithm can touch. Back up everything with the 3-2-1 rule — three copies, two storage types, one offsite. If they delete you tomorrow, your work still exists.

Amplify Each Other. Community is the algorithm workaround. The Toolkit lays out how to run coordinated engagement campaigns — choosing one piece of content per week to collectively boost. When communities organize behind each other, suppression breaks. We've seen it work in real time.

Demand Accountability. Algorithmic bias shapes hiring, housing, healthcare, education, and criminal justice. The Toolkit gives you a step-by-step guide to documenting and reporting bias to the FTC, the EEOC, the CFPB, and local regulators. In New York City, Local Law 144 already requires auditing of AI hiring tools. The tools exist. We need people filing.
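For anyone who wants to check their own setup, the 3-2-1 backup rule from the Protect Yourself pillar can be sketched in a few lines of Python. This is only an illustration, not part of the Toolkit itself: the `BackupCopy` class, the `check_3_2_1` function, and the example storage labels are all hypothetical names made up for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    """One copy of your work (hypothetical structure for illustration)."""
    label: str         # a name you choose, e.g. "laptop"
    storage_type: str  # e.g. "internal_ssd", "external_hdd", "cloud"
    offsite: bool      # True if the copy lives away from your home or office


def check_3_2_1(copies):
    """Return (ok, problems) for a list of BackupCopy objects.

    The rule as stated above: three copies, two storage types, one offsite.
    """
    problems = []
    if len(copies) < 3:
        problems.append(f"only {len(copies)} copies, need at least 3")
    storage_types = {copy.storage_type for copy in copies}
    if len(storage_types) < 2:
        problems.append("need at least 2 different storage types")
    if not any(copy.offsite for copy in copies):
        problems.append("need at least 1 offsite copy")
    return (not problems, problems)


if __name__ == "__main__":
    # Example inventory; the labels and storage types are assumptions.
    my_copies = [
        BackupCopy("laptop", "internal_ssd", offsite=False),
        BackupCopy("desk drive", "external_hdd", offsite=False),
        BackupCopy("cloud archive", "cloud", offsite=True),
    ]
    ok, problems = check_3_2_1(my_copies)
    print("3-2-1 satisfied" if ok else "Gaps: " + "; ".join(problems))
```

Listing your copies this way makes gaps obvious: drop the cloud copy from the example and the check reports both a missing third copy and a missing offsite copy.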

This Is Bigger Than Content

This is about who gets to be visible. Who gets to build wealth. Who gets to tell their own story.

The same companies suppressing our voices online are building data centers in our neighborhoods, driving up our utility bills, and consuming our water. Digital extraction doesn't stay digital.

The Toolkit for Digital Liberation exists because platforms will not protect us. So we protect each other.

Behind the scenes: Muted on Arrival, Blackweek 2025.